Dance Diffusion KGUI

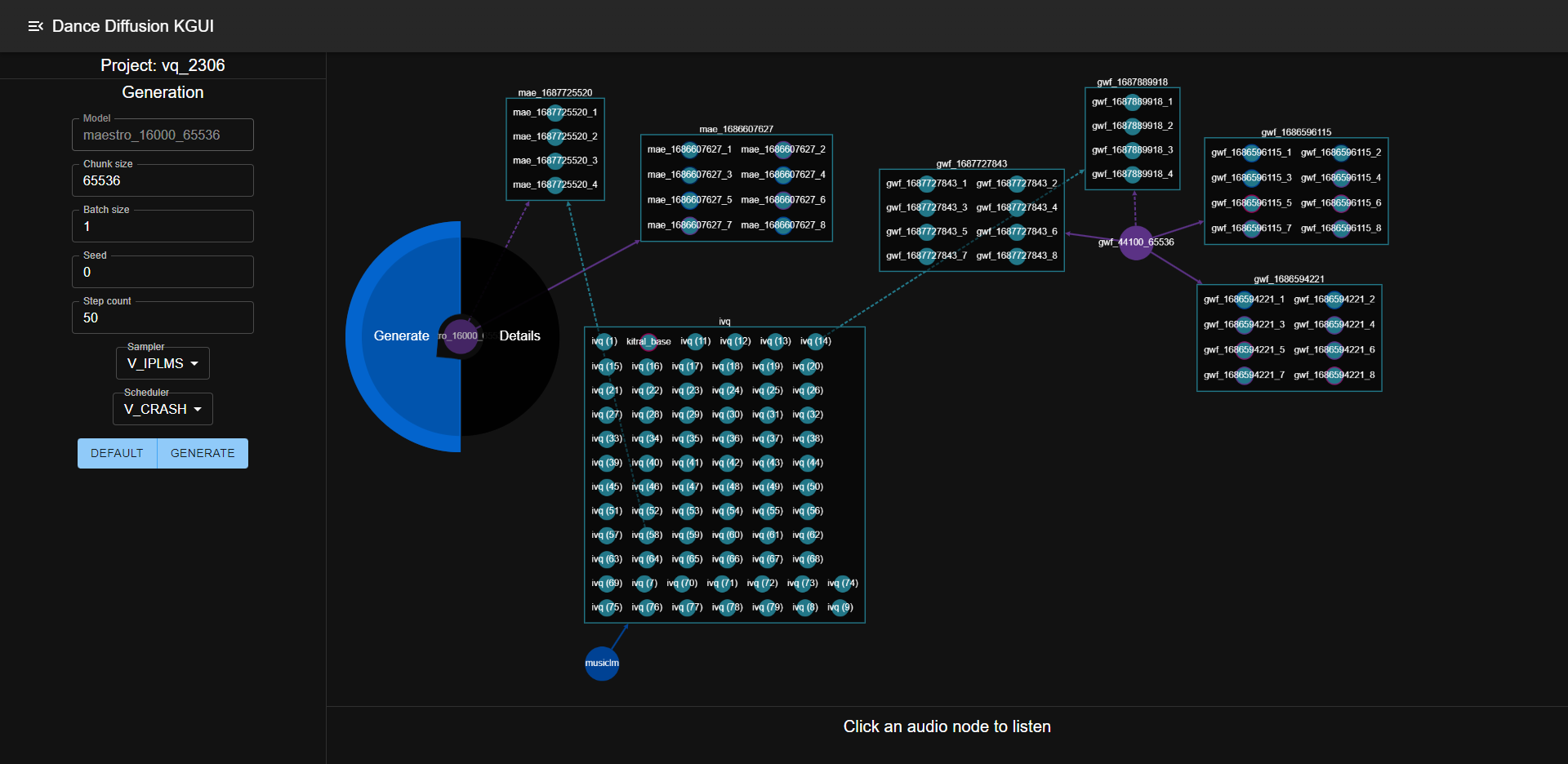

A knowledge graph UI and logging system for Dance Diffusion models, based on the sample-diffusion CLI.

The motivation is twofold: to provide an interactive UI for using Dance Diffusion models, and to provide a tool for curating datasets for fine-tuning. Mapping data points to a knowledge graph keeps track of the relationships between them and makes RLHF-related tasks easier, such as adding tags or captions, ranking samples in a batch, or describing how a sample changed during variation or manual editing.

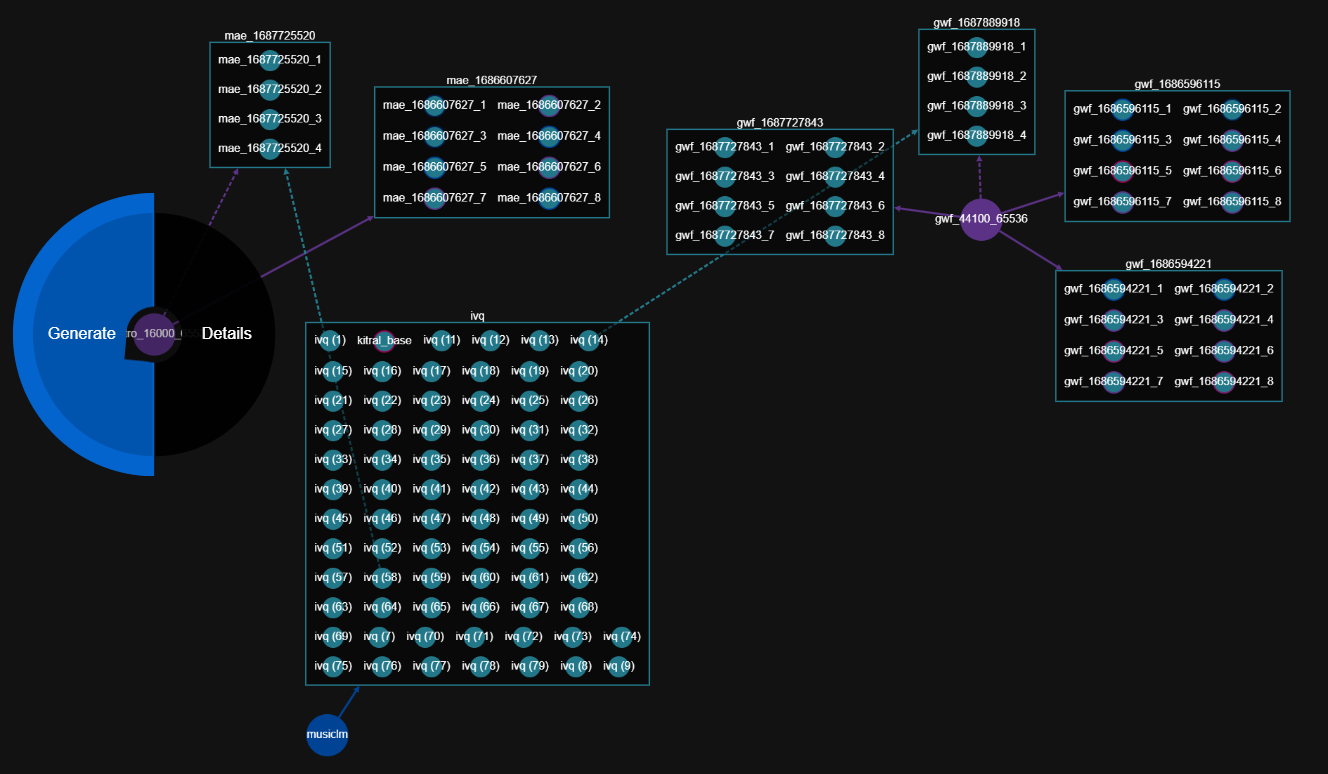

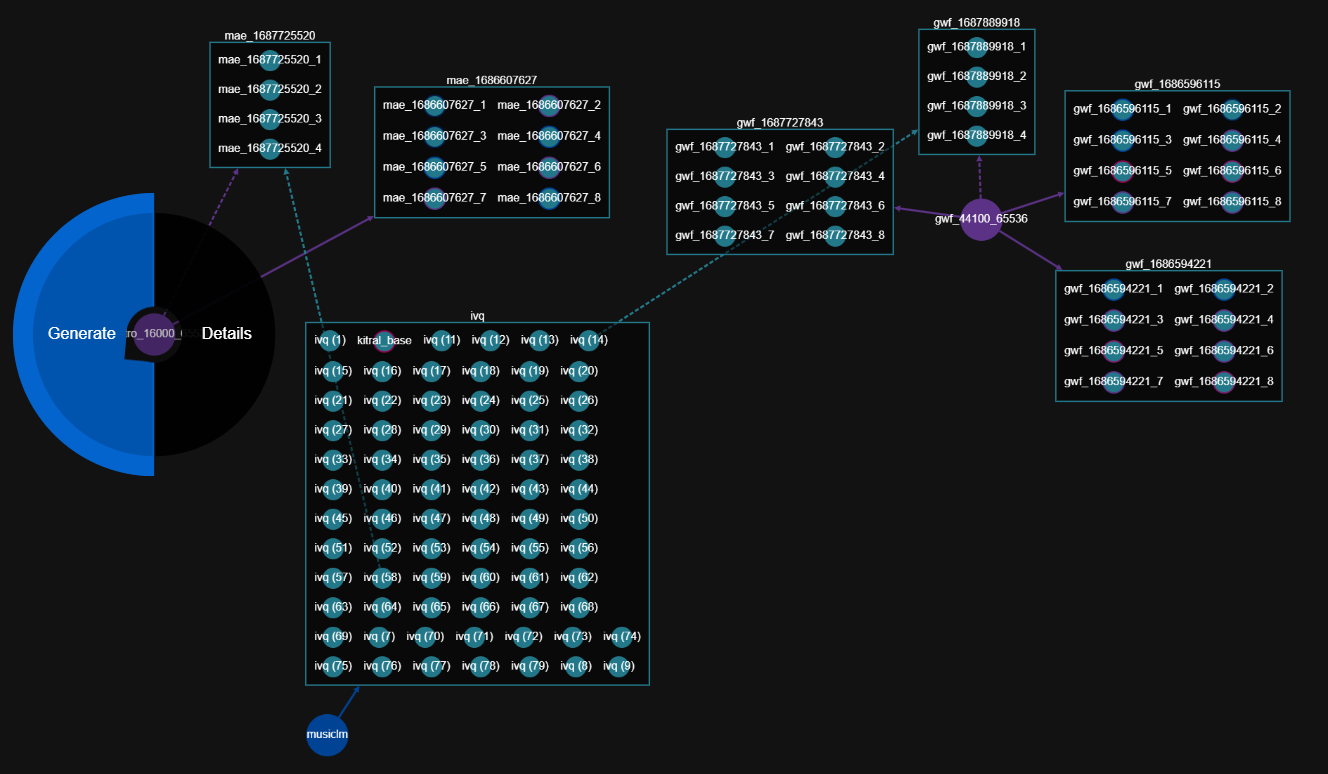

Elements can be dragged around and arranged automatically using the fCoSE layout.

- Purple nodes: models

- Cyan nodes: audio samples

- Boxes: output batches

- Blue nodes: external audio source directories

- Arrows: generation (dashed arrows indicate variation: purple from the model, blue from the audio source)

- Dance diffusion generation and variation

- Models, external sources, and audio files represented as interactive nodes

- Audio can be played from the UI

- Samples can be tagged, rated, and captioned

- Batches/datasets can be collapsed

- Additional inference modes (interpolation, inpainting, etc.)

- Search and filter

- Export filtered datasets for fine-tuning

- Representation of custom processes

- Training of auxiliary models for reinforcement learning from human feedback
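
To make the node and arrow legend above more concrete, here is a minimal Python sketch of how such a graph could be represented and filtered. It is not the project's actual data model: the class names, field names (`kind`, `parent`, `tags`, `rating`), the `filter_samples` helper, and the choice to point arrows at batches rather than individual samples are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

# Node and edge kinds follow the legend; the identifiers are hypothetical.
NODE_KINDS = {"model", "sample", "batch", "source"}
EDGE_KINDS = {"generation", "variation"}


@dataclass
class Node:
    id: str
    kind: str                       # one of NODE_KINDS
    parent: Optional[str] = None    # id of the batch box containing a sample
    tags: set = field(default_factory=set)
    rating: Optional[int] = None
    caption: str = ""


@dataclass
class Edge:
    source: str
    target: str
    kind: str                       # one of EDGE_KINDS


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)

    def add_node(self, node: Node) -> Node:
        if node.kind not in NODE_KINDS:
            raise ValueError(f"unknown node kind: {node.kind}")
        self.nodes[node.id] = node
        return node

    def add_edge(self, source: str, target: str, kind: str) -> None:
        if kind not in EDGE_KINDS:
            raise ValueError(f"unknown edge kind: {kind}")
        if source not in self.nodes or target not in self.nodes:
            raise KeyError("both endpoints must be added before the edge")
        self.edges.append(Edge(source, target, kind))

    def parents_of(self, node_id: str) -> list:
        """Ids of the nodes with an arrow pointing at node_id."""
        return [e.source for e in self.edges if e.target == node_id]

    def filter_samples(self, tag=None, min_rating=None) -> list:
        """Sample nodes matching an optional tag and minimum rating."""
        result = []
        for node in self.nodes.values():
            if node.kind != "sample":
                continue
            if tag is not None and tag not in node.tags:
                continue
            if min_rating is not None and (
                node.rating is None or node.rating < min_rating
            ):
                continue
            result.append(node)
        return result


def build_example() -> Graph:
    g = Graph()
    g.add_node(Node("model-a", "model"))
    g.add_node(Node("source-dir", "source"))
    # A plain generation batch produced by the model.
    g.add_node(Node("batch-1", "batch"))
    g.add_node(Node("sample-1", "sample", parent="batch-1", tags={"kick"}, rating=4))
    g.add_node(Node("sample-2", "sample", parent="batch-1", tags={"snare"}, rating=2))
    g.add_edge("model-a", "batch-1", "generation")
    # A variation batch: one arrow from the model, one from the audio source.
    g.add_node(Node("batch-2", "batch"))
    g.add_node(Node("sample-3", "sample", parent="batch-2", tags={"kick"}, rating=5))
    g.add_edge("model-a", "batch-2", "variation")
    g.add_edge("source-dir", "batch-2", "variation")
    return g
```

With a structure along these lines, calling `build_example().filter_samples(tag="kick", min_rating=4)` would select the samples to include in an exported fine-tuning dataset, and `parents_of` recovers which model and audio source a variation batch came from.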